By trade, Natalie Jeremijenko is an engineer, an environmentalist and an activist. But everything she does also achieves the condition of art. In a 1997 project called BIT Plane, she flew a camera-equipped remote control plane over Silicon Valley, capturing grainy, black and white footage of a number of large tech campuses. It is arguably the first piece of drone art ever made. This makes Jeremijenko a bit like the Marcel Duchamp of what has now become an entire artistic genre that takes as its singular subject the Unmanned Aerial Vehicle. Jeremijenko’s work on drones continues to this day. With the Environmental Health Clinic at NYU, she is exploring ways of deploying unmanned technology for environmental rehabilitation. A fierce critic of the U.S. government’s use of drones for targeted killing, Jeremijenko is seeking new ways of understanding this technology. She wants us to completely disassociate ourselves from what she takes to be a narrow and unimaginative view of drones and their potential.

Jeremijenko is currently an associate professor at the NYU Steinhardt School of Culture, Education, and Human Development. Previously, she taught engineering at Yale. Her work has appeared in galleries internationally, and she has collaborated on numerous books, studies and websites, including the Bureau of Information Technology, The Environmental Health Clinic, howstuffismade.org and Experimental Design. In June, Jeremijenko was profiled by New York Times Magazine, and in October she was a keynote speaker at the Wired 2013 conference.

Interview by Lenny Simon

Center for the Study of the Drone So Natalie, what exactly do you do?

Natalie Jeremijenko I run the Environmental Health Clinic at NYU, where I’m faculty. My work is to do with seizing the opportunities that new technologies provide for social and environmental change—desirable change.

Drone In 1997, you made BIT Plane, and that was before drones were in the news at all. What was your thinking behind the project, and what were you trying to achieve at the time?

Jeremijenko The BIT Plane project was an exploration of this strange new information sphere. We equipped radio-controlled airplanes—they weren’t even called UAVs at that point, let alone drones—with cameras, and we flew around the Silicon Valley. Which is an area that’s not only named for being kind of a hot spot for information technology, but it’s there because of the flight technology. It was good for flying. That’s why the military technology was there, that’s why the vendor base—all the companies that made electronic equipment for the military—is there, and that’s why the information and microprocessing industry built up there, on the basis of the flight conditions. So BIT Plane was an exploration into this new territory I called “information space.” Where ideas about information were being played out on the ground, so to speak, tangibly changing what places looked like, the geography.

What was peculiar was all these corporate campuses—Sun Microsystems, Xerox PARC, Interval Research, SRI (i.e. Stanford Research Institute)—they were all no-camera zones. You couldn’t take cameras in there. The idea that motivated the elaborate security, and the signing in and surrendering any camera at the security desk, was that if you took a camera in there, you could somehow steal intellectual property. And that didn’t really sync with my idea of what ideas, thinking, intellectual property is, right? Information is a property of people and communities and discussions, and actual work, it’s not something you can just take a picture of and steal. But that was the paradigm, and it actually remains the prevailing paradigm, that information is property, that it can be stolen with cameras. So flying the BIT Plane through these no-camera zones was part of the exploration of what you could actually just see. What could you actually see? What information could you take from the plane? Of course, the answer is not much (as contemporary drones have so aptly demonstrated)—lots of images but not much actual trustable information.

Drone How do you reconcile the ideas of open access and freedom of information with the idea of drones, and the ability to spy on people at any time, and the potential loss of copyright? Does that scare you, or do you see that as a kind of new, novel way of dealing with old problems?

Jeremijenko Drones are invasive, but I don’t think of them as an invasion of privacy. The absolute senselessness of drone-generated images and the fire hose of drone-generated images that we’re now facing just doesn’t have much sense. This is what needs to be re-asserted: seeing people talking, or even their laundry, does not provide evidence for “imminent threat,” it shows that mostly people are tied up, bound in daily activities of life. You can learn who puts out the washing, but not what they are conceptualizing while doing so. It is just inaccessible—and any “intelligence” that claims the ability to access is lying.

More importantly, the question is: for what are drones being used? And what opportunity do they actually present?

I suppose I take the quite controversial position that I don’t have a problem so much with targeting. If drones are used primarily as the tool for targeted killing, I don’t have a problem so much with the targeting, but I have a big problem with the killing part of targeted killing.

When it comes to drones, it is a tremendous cultural challenge to ask, Can they do something productive? And I’m afraid that surveillance, even for agricultural or environmental purposes, though often touted as a good use of drones, is pathetic, right? It doesn’t give you the on-the-ground uses of knowledge to be able to address the environmental issues that they’re supposedly surveilling, and it doesn’t give you the capacity to act in a productive and constructive way, unless you are on the ground and working that agriculture or fishery. So my concern about drones is not that they are somehow invading privacy. Take all the images you like; I don’t see what sort of sense you can make of them. My real concern is the larger cultural challenge that we face: can we use these constructively?

Drone And do you think that it’s actually possible to use a drone constructively, or do you think that it’s just the wrong tool for the wrong job?

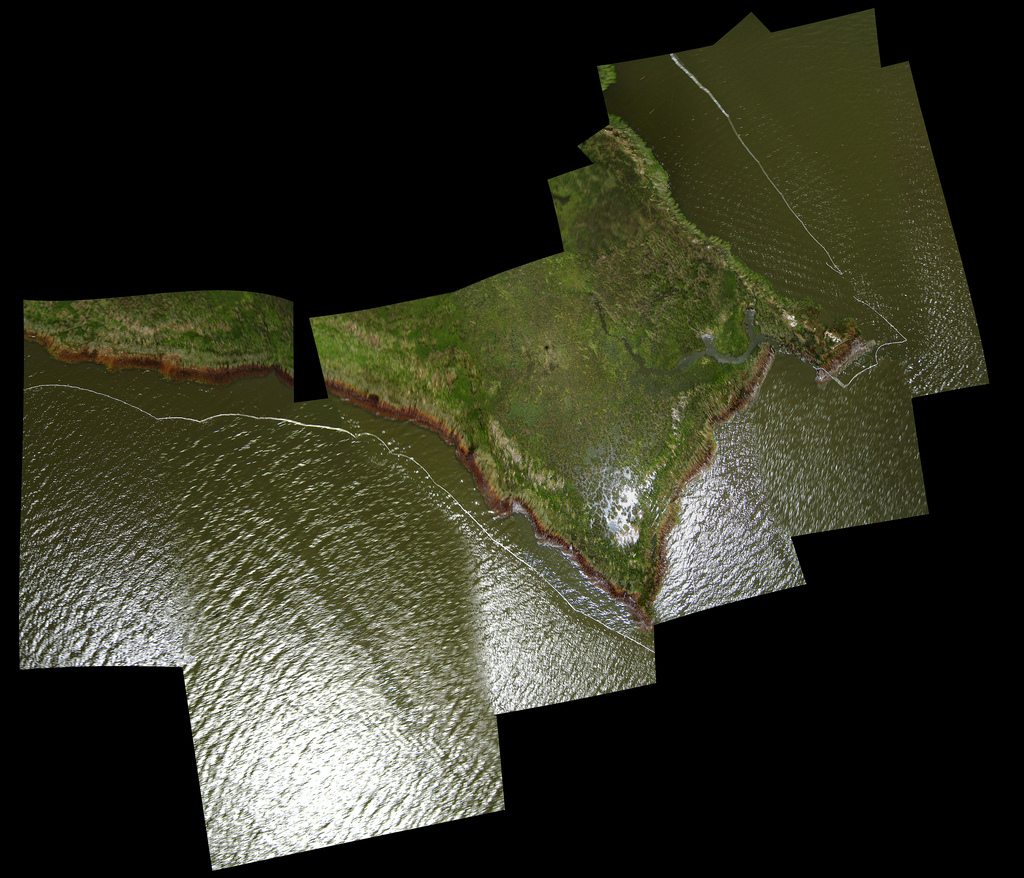

Jeremijenko I have a wonderful former student who’s absolutely adamant that the remoteness and the resource-intensiveness of drones—this is Jeff Warren who founded and runs something called Public Lab and has used non-remote surveillance (or a sort of sousveillance)—by definition prevents them from being useful to a community, and by extension, then, that drones are by definition bad. He uses kites and $15 polystyrene toy planes to generate aerial images useful to particular communities. For example, images of the spill in the Gulf at 10,000x higher resolution than what was available on Google Maps: compounded, stitched images covering swathes of land with which people could actually see oil-covered birds and other organisms not visible from Google Maps until, that is, they licensed the images of Public Lab. A licensing agreement was signed, even though Public Lab didn’t want or need this legal agreement. Public Lab images are freely available to the public. But this annexing into “Googleland” garnered no funding or support for the community groups who generated these images, and did not generate support for the cleanup they were coordinating; that is, what happened on the ground, where productive work is done. Google made these available to many others to spectate, primarily, to make these locally generated images remote images. I haven’t followed up in detail with the groups involved in generating the images in the first place, but by the time Public Lab’s images were posted, agreements signed—i.e. Google forcing Public Lab to treat these images as “property”—the cleanup efforts were well underway, and spectators can’t really and don’t feel compelled to act with out-of-date information.

I would argue that drones do have some interesting characteristics that are being exploited by the military. But what I’m working on is figuring out what we can use drones for uniquely. And certainly, whether you’re in Pakistan or Afghanistan or Bard or New York City, we all face these tremendous challenges in redesigning our relationship to natural and open systems in a way that the 21st century demands. What I’m doing research on is how we could structure that participation in a demilitarized way, but nonetheless a constructive and productive way. Demilitarization of a technology is usually a complex technical, social and political challenge.

Drone If you were going to do a BIT Plane project today, how would it be different?

Jeremijenko I am working on a sequel to BIT Plane. And it’s largely about this work of figuring out what we can use drones for. First of all, we’re seizing the wonder of flight, taking it back, and finding that visceral engagement. We’re refusing, if you will, to “unman” flight. What fun is unmanned flight? We made a set of what I call “prosthetics for the imagination,” which are strap-on flight simulators that you put on your hand. Then you stick your hand out the window of a car so you use your car as a portable wind tunnel and practice your flight maneuvers. It’s a kind of manned flight.

We also made a wing swing that’s based on some of the aerofoil designs for drones (and the flying squirrel’s flight system: the most extraordinary aerodynamics that make drones look altogether clumsy). Using ziplines and 16-foot wings you literally fly like Superman, you feel the gentle phenomenon of lift, and experience fast, emissionless, inexpensive urban transportation.

And then there’s Petbot, which is a slightly schizophrenic robotic documentation tool with perpetual amnesia—he overwrites himself all the time—and he’s crazily documenting your life so that you’re not self-documenting, in the sense of the traditional cinematic contexts. These projects have a very different structure of participation than the kind of surveillance that we use drones for.

I have a burgeoning collaboration with a group in Pakistan, where we’re exploiting these robotic platforms to improve environmental health, improving air quality, soil biodiversity, water quality, food systems. The idea is that they’re doing these small test runs there and I’m doing some here, in New York and in London, and we’re comparing them. They exploit these robotic platforms for what they’re good at doing. But there’s also a charisma about—let’s call it the drone point of view, right? This largely robotic view that’s actually very sympathetic. With the camera on the bot, the viewers can’t help but feel sympathetic. You actually inhabit that position, either virtually or physically, to the extent that you can really look at the world differently, through this robotic armature. I think that it is thrilling, interesting, and potentially very productive.

With a drone, the first time you’re up there flying, and your controls are facing one way and you’re not sure which way the image is facing or which way the vehicle is facing, and so you’re constantly getting dislocated and yet you’re seeing yourself and seeing your neighborhood, it’s a thrill. There’s a fascination about the capacity we have to take other points of view, which I think is very intellectually productive, and just seductive. It’s just fascinating to look at. But what we do with that view is then the question.

Drone Right, there’s a real difference between using that view to explore your world and using that view to, say, take somebody out.

Jeremijenko Exactly. So the structure of participation or the structure of accountability—how much we can delegate our responsibility or our accountability for the actions that the robotic platform takes—is the thing at stake. So although the pilot may be removed from the platform itself physically, they are in no way completely removed from it. Consider the post-traumatic stress, the terrible consequences of being a drone pilot (Peter Asaro’s paper on this is great); in no way is the pilot’s accountability severed. It is a militarized position that Thoreau described: “the mass of men serve the state thus, not as men mainly, but as machines, with their bodies.”

If technology represents us (and vice versa) then what is encouraging about drones is that they demonstrate how good people really are, as a rule of thumb. The trained soldier, paid (not very well) to kill, has difficulty doing violence without distance, remove and remoteness. Drones are not cheaper when they’ve done the actual costings; the problem drones actually address is the one shown by the soldiers in the trenches of WWI who were firing up into the air (perhaps at the imminent future of militarized airspace) because they couldn’t or wouldn’t kill on command. Drones, and their cronies, really humanize soldiers and military contractors, and yet they don’t protect them from the guilt, PTSD or ugly moral kickback borne not by the state but by the operators, victims and their families and communities.

Drone You seem to make a distinction not only in the way the technology is used, but in the technology itself, and I’m wondering, do you put technology into categories? Is there friendly technology on one hand, and unfriendly technology on the other? How do you think about the different ways that we interact with technology?

Jeremijenko I use a very simple analytic framework, and that’s that a video camera is a technology, but unless you understand the video camera in the context of the structures of participation, you don’t know what it really is. Take, for example, a video camera in a shopping mall. You can’t go and have a look at that camera footage and check how you look from behind in the jeans that you’re trying on. You don’t have access to the data; you can’t interpret the data or use that data. For me, when you don’t have access, or the capacity to analyze the data, that changes that structure of participation. That’s a structure of participation that turns the technology—the video camera—into a surveillance technology.

Whereas if I’m looking at you from my window, that’s voyeurism; that’s a structure of participation that’s different from a worker or a corporation surveilling customers. The idea of understanding the technology very much has to do with the structure of participation within which it’s used. The military structure of participation is very different from the civic structure of participation. If you understand that the structure of participation is one in which you’re accountable to the people that you’re working with, that helps to demilitarize the technology. Of course, in a military context, responsibility is dislocated from the person, propagating up to the next rank. An engineering professor at West Point in a similar position to me—we taught the same courses when I was on the faculty of engineering at Yale—got fired because of an off-color presentation one of his students gave. At the time I was baffled, not only at the thought of the several times I would have been fired, but at the idea that he was responsible—it just doesn’t make sense to citizens. If we teach one thing in academic careers it is that one takes responsibility/authorship of one’s work. But West Point isn’t really a university. It is just like the drones, built to serve the same structure of participation/institution: unmanning men or people, so that they are not responsible, so they carry out other people’s orders.

Drone Do you think that technology is always political, or can it be neutral, and where do drones fit in? Do you see those structures of participation being independent from the technology itself, or are they completely connected to it?

Jeremijenko As a rule of thumb, we should think of technology as a profoundly conservative social source. By definition, it is resource intensive. It costs a lot of money and time to build a technology; therefore, technology is built in the interest of those with money and time. Hence so much military influence on our technology. The things that work on our side are these unintended consequences, the complex systems that technology is a part of, these techno-social systems that are not controllable or completely comprehensible. So the unintended consequences can be exploited by those of us with fewer resources and a commitment to a participatory democracy.

There is a pervasive phrasing, “the impact of technology”: this insidious idea that technologies impact us like a meteor. You know, actually, we get the technologies we deserve, because we produce the technologies, and, to paraphrase JFK, don’t ask what technology can do for you, ask what you can do for technology. So I think the cultural challenge is to take these technologies and figure out how to use them to create a desirable future. That’s the fundamental participatory demand. We are better than drones make us look, and we have to make them better than that.

Use of drone technology as a weapons platform, or as an instrument of execution, fear generation or hatred engineering, serves the function of alienating foreign people all too well. As a political tool, it is all too short-lived. Similarly, survivors learn, adapt and, limited only by their creativity, begin the snare.

This gives rise to several questions, most of them unpleasant in an age of head-bopping indifference, but as the professional, shopkeeper, managerial and educated classes of people join the impoverished in this trend, the legal servant system follows. This America is now in the age of fear, and drones, as weapons, serve fear and arm’s-length executions all too well.

That said, I reiterate my earlier argument. The applications to which drones are put as an extension of the political resolve, where there is such, are more to the point. Drone policy appears to be an extension of multinational corporate policy to produce a profit. Exporting the manufacturing heart of America to a perilous interdependent world trade system brings dirt cheap products at a time when debt in America reigns supreme.

Drone technology, particularly for warfare, will not abate. This is the dawn of the terminator; their intelligence and integration into a technical world will be gifted by some of the most brilliant minds available. Is that what you want? Current policy serves to extend barriers to competition and use of national airspace by agencies without the Constitutional power to make law. The FAA acts to regulate business as a barrier to entry without accountability but with the force of civil asset seizure using the prosecution of the state, the federal state. Constitutionally, this is a power reserved to the states and only the Interstate Commerce Clause and judge-made law enable its workings. Judges should thus be scrutinized in making policy where their accountability and authority are lacking.